New Adobe Enhance Speech tool works AI miracles!

Having just used the new Enhance Speech tool, I’m left wondering what sort of AI voodoo Adobe have employed this time. I stumbled across this new podcast tool while looking for ways to clean up an unintentionally roomy recording. In the past, I might have turned to de-reverb solutions such as iZotope’s De-reverb or SPL’s De-Verb. While these tools can be effective, Adobe claims that Enhance Speech uses AI to make bad recordings sound as if they were recorded in a professional studio. A bold claim! Let’s delve in and see how the Adobe Enhance Speech AI performs in practice.

How to use it.

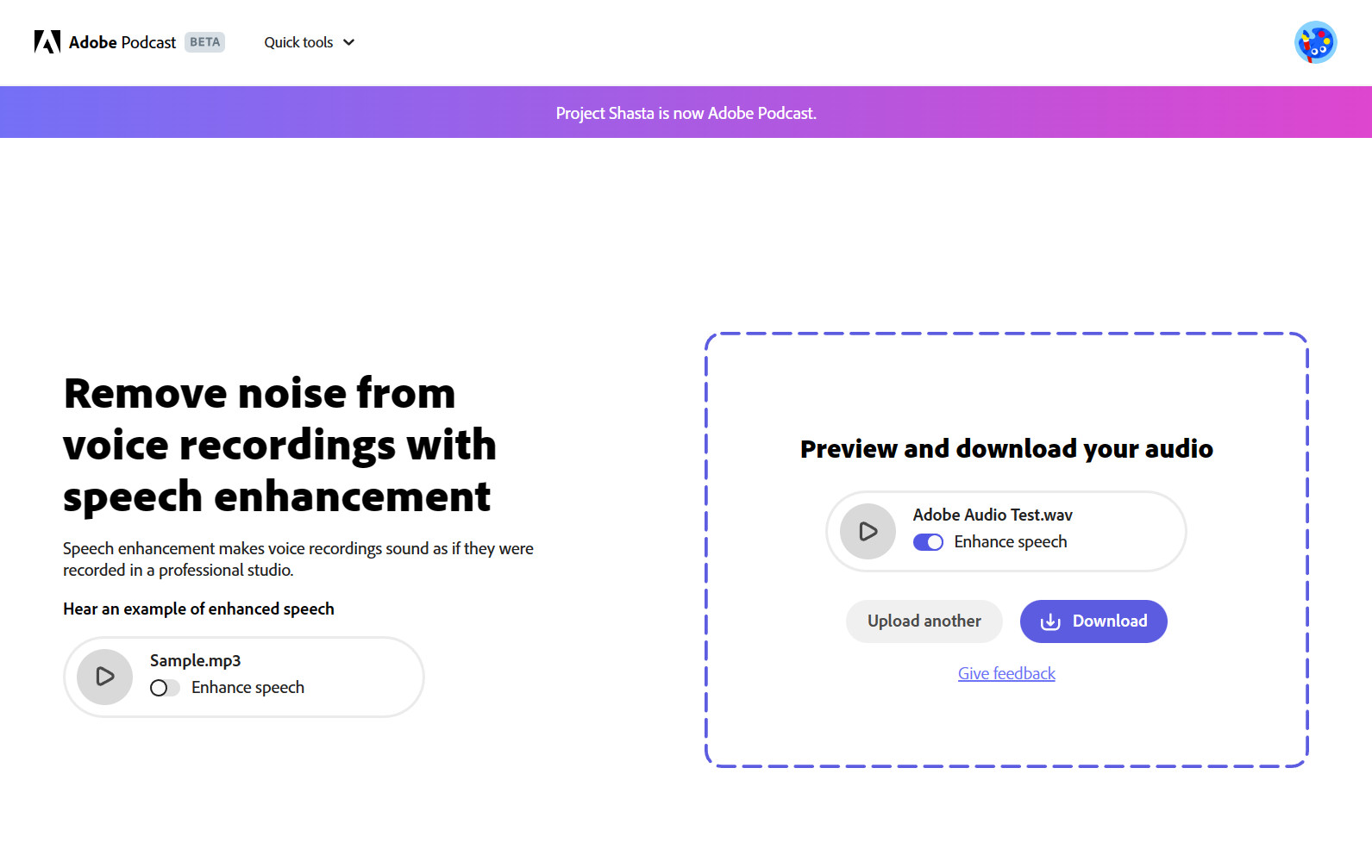

Adobe’s Enhance Speech is part of a comprehensive suite aimed at the podcast market. Originally called Project Shasta, Adobe Podcast looks set to dominate the world of podcasting in the same way that Adobe has dominated design.

Click here for more information about the impressive features of Adobe Podcast.

But we are here to discuss the Enhance Speech tool, the feature that is currently causing a big stir online. Adobe Podcast is currently in beta testing, and to use the full suite you must request access. However, to use the Enhance Speech tool you simply need to create a free Adobe account and you’re in.

The first thing you’re struck by is the lack of editable options (we will come to this in a bit). Simply drag and drop the audio that you want to process. You have the option of loading mp3 or wav audio files. If the files you want to enhance are not in one of these formats, fear not: RouteNote Convert will quickly transform almost any file into an mp3 or wav.

As soon as you drag the audio in, it starts processing. Depending on the length of the clip, this can take a little while; a long clip is a good excuse to go and make a coffee. Once it’s done, you can preview the audio. There is even a switch to toggle between the original audio and the Adobe-enhanced version. If you’re happy with the result, you can download it. You also have the option to leave feedback, which could be useful as Adobe Podcast is currently a beta project. But that’s pretty much it! It really is that simple!

How did it do?

I tested Adobe Enhance Speech in three situations. On the whole, the difference between the original and enhanced audio was night and day, especially with the poorer recordings. So, let’s check out my findings along with the associated audio clips.

Example 1:

The first example was recorded outdoors with trees rustling in the wind. The results for this one were staggering. You still got a sense of being in an outdoor environment, but the background ambience was now very low indeed. There were also minimal audio artefacts in the dialogue. In fact, Adobe Enhance Speech even did a fantastic job of repairing some rather nasty clipping.

Example 2:

The second example was recorded in an office environment. There was a subtle degree of hustle and bustle in the background. Think of a low murmur of conversation mixed with countless computer keyboard strikes. The mic used for the dialogue was a budget lapel mic. Again, Adobe Enhance Speech performed impressively. The slightly harsh sound of the mic seemed to acquire a richer, warmer tone. The background noise was almost non-existent. If anything, when married to the video footage, the dialogue seemed almost unnatural and over-dubbed.

Example 3:

So far, so good. But when I tried out Enhance Speech on some less challenging dialogue, it actually came slightly unstuck. Example 3 was recorded on an iPhone mic in a treated room (a recording studio) at a distance of approximately 150 cm from the subjects. The dialogue, therefore, had some noticeable room ambience but nothing too troubling.

The processing did successfully remove the room noise, but in doing so it left a noticeable warble artefact on some words and phrases. Certain words also sounded less defined, and in some cases there were noticeable syllable dropouts. This resulted in some of the passages sounding slightly robotic. I found this rather strange, as it was certainly the least challenging audio to process. I even tried providing the audio at different volumes, but this seemed to make no difference.

Test Conclusion

Judging by my findings and those of various YouTubers, it seems that Adobe Enhance Speech produces somewhat unpredictable results. On the whole, it works wonders on less-than-perfect dialogue. I have been truly staggered by some of the examples! Unfortunately, the robotic effect that I noticed in the final example has slightly let it down. It is still in beta testing, so hopefully Adobe will be able to fine-tune it and improve the AI technology to prevent this.

I couldn’t help thinking that an option to dial in the percentage of processing applied would help considerably. I felt at times that the effect, although impressive, was maybe a tad too much and slightly over-processed. A little more control over certain parameters would therefore be very welcome.
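To illustrate the kind of control I mean, here is a minimal Python sketch of a dry/wet blend between the original clip and the enhanced download. This is not something Enhance Speech offers, and it is not how Adobe’s processing works. The function name `blend_dry_wet` and the sample values are hypothetical, and the sketch assumes both clips have already been decoded to floating-point samples that share a sample rate, are time-aligned and have the same length.

```python
def blend_dry_wet(original, enhanced, amount):
    """Mix original (dry) and enhanced (wet) samples.

    amount is the fraction of processing to keep: 0.0 returns the
    original clip, 1.0 returns the fully enhanced clip.
    """
    if not 0.0 <= amount <= 1.0:
        raise ValueError("amount must be between 0.0 and 1.0")
    if len(original) != len(enhanced):
        raise ValueError("clips must have the same number of samples")
    # Linear crossfade applied sample by sample.
    return [(1.0 - amount) * dry + amount * wet
            for dry, wet in zip(original, enhanced)]


# Hypothetical sample values in the range -1.0 to 1.0, for illustration only.
original = [0.5, -0.25, 0.75, 0.0]
enhanced = [0.25, -0.25, 0.5, 0.0]

# Keep 50% of the processing.
mixed = blend_dry_wet(original, enhanced, 0.5)
print(mixed)
```

In practice you could get a similar result in any DAW by layering the enhanced file over the original and balancing the two faders, provided the enhanced file has not been shifted in time.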

Summary

While Adobe is clearly targeting this suite of tools at the podcast market, its potential is much wider. For example, this could be a really handy production solution for cleaning up poorly recorded speech samples. Unfortunately, from the few examples I have seen, this tool does not work particularly well on sung vocals. Rap vocals may fare slightly better, and I certainly plan on testing this further down the line.

Where Adobe plan to take their podcast suite once it has left the beta-verse is open to speculation. Will they continue to offer these tools for free? Judging by Adobe’s previous form and propensity for subscription-based products, I am personally doubtful. In fact, one of my favourite YouTube comments stated: “The most mindblowing thing in this video is that Adobe released something for free”.

If you work with a lot of dialogue, then I would suggest that Adobe Enhance Speech is definitely worth checking out for yourself.
